When the spotlight is on AI Impact, where do you see those impacted?

The very people whose data trains AI systems, whose labour refines them, and whose lives are shaped by them are usually left with little to no say in how AI is designed or governed. The citizen is often left out of the loop as business and government join hands, creating an increasingly steep power asymmetry. At the Participatory AI Research Symposium (PAIRS), I got a glimpse of what the missing link could look like.

Following the first edition alongside the Paris AI Action Summit in February 2025, PAIRS 2026 took place as an independent side-event of the India AI Impact Summit. While the theme of the India AI Impact Summit heralded ‘People, Planet and Progress’, at PAIRS the people-centric dialogue flowed. Backed by research and grounded in practice, PAIRS brought the voice of the people into the conversation and tipped the imbalanced scales in their favour through its tracks of Participatory AI Development, Governance, Power, and Negotiation. As Generative AI learns from people at every stage, it is only fitting to study it through a participatory, people-centric lens.

The co-chairs, Tim Davies and Astha Kapoor, curated a diverse range of work across contexts, disciplines, and methods, keeping in touch with the pain points of people worldwide. From co-developing AI tools with the Marwari communities to using Theatre of the Oppressed as a tool for AI literacy, the synchronicity between these studies was both illuminating and unsettling with regard to the trajectory of AI evolution and diffusion.

PAIRS also put in perspective for me our work at CDF. Through our Youth Dialogue Forum series, we bring young people together to have a say in the technologies shaping their lives. Watching participatory approaches unfold at PAIRS reiterated for me the significance of these conversations in laying the groundwork for meaningful change. The insights from these discussions reveal what metrics fail to capture, going on to inform our recommendations to government and industry, and shape our on-ground interventions.

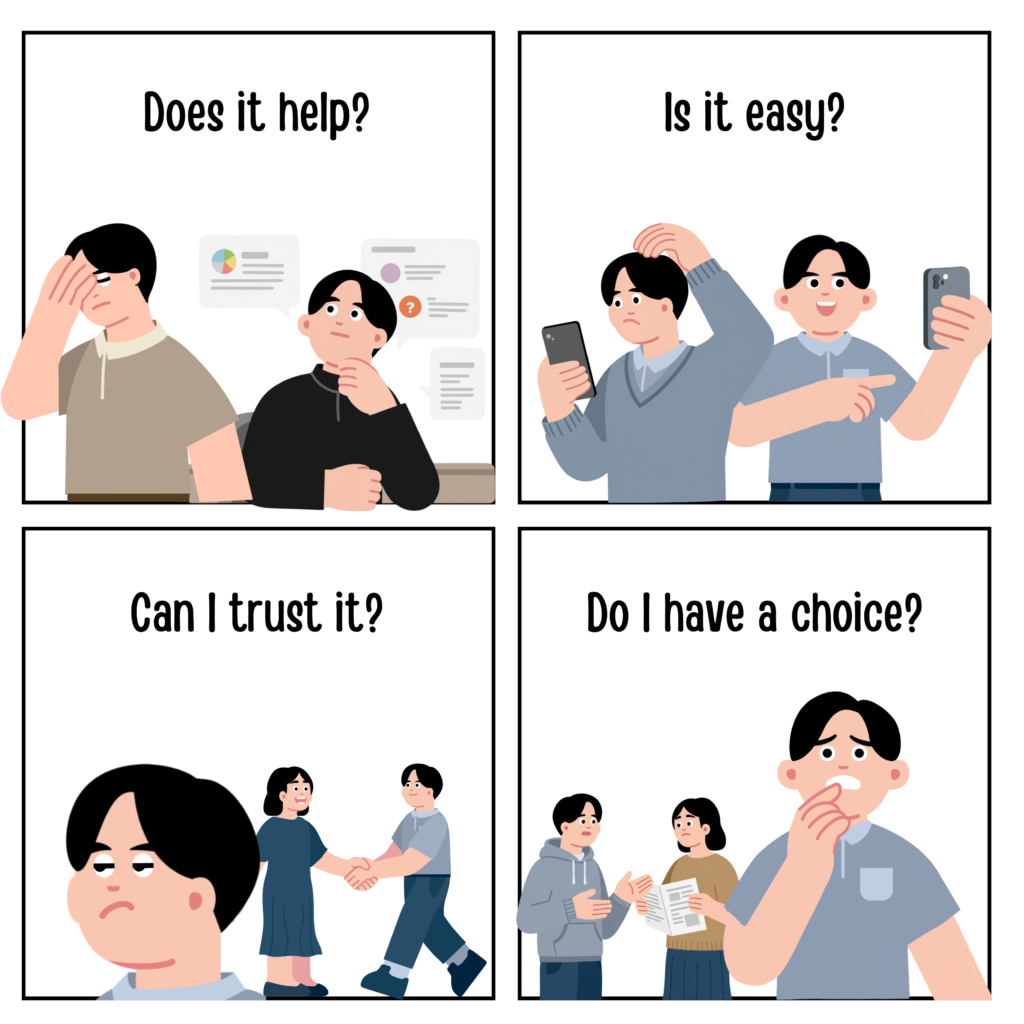

The YDF we held in August 2025 fed directly into the work I presented at PAIRS. While GenAI’s record adoption rate dominates the narrative, we were more interested in what cannot be measured or quantified. We took a narrative approach with this still-nascent technology, moving beyond technology acceptance models to examine AI through the lens of domestication: the process by which users make sense of a new technology and integrate it into everyday life.

Initial reckonings centred on traditional technology acceptance questions of perceived usefulness and ease of use. But with generative AI, other factors of trust, anthropomorphism and user agency come into play. Unlike its predecessors, GenAI simulates human conversation and connection and elicits emotional responses. In the Indian context, where technology is tied to modernity, opportunity, employability, and social mobility, opting out is not a neutral act. For consent to be meaningful, it must be informed and uncoerced. The same applies to user agency and participation.

So, we looked beyond the binary of acceptance or rejection and into the nuances of user experience.

Here’s what we found.

High usage, low trust

GenAI usage was widespread and frequent, especially for writing assistance and brainstorming, with emerging cases of emotional support. Convenience and accessibility drove continued engagement. However, this coexisted with scepticism. Distrust stemmed from negative experiences, general caution toward new technologies, and a fairly high awareness of risks and limitations, including bias, hallucinations and sycophancy. Notably, distrust did not lead to disengagement, but to adaptation.

Negotiated use and control

Young users managed their trust through more cautious, controlled use, such as restricting GenAI to low-stakes tasks. In an effort to establish a sense of control and justify continued usage, they framed AI as a catalyst and not a substitute for human labour, whether cognitive or emotional.

Participants emphasised human agency, maintaining that the tech is neutral and it is people who choose to use or abuse it. This narrative echoes tech leaders at the summit, delegating responsibility back to the users. The question remains whether users always have the power to resist, or whether the tech is designed in a way that further incapacitates them. Yet our participants reiterated that it is up to users to exert their autonomy to overcome reliance on the tech.

Tool or social actor

A shift was emerging from functional use to emotional support. As a linguist, I was fascinated by the telling Freudian slips. Referring to ChatGPT as “he,” then correcting to “it.” Describing it as “someone” you go to. Using personifying language and humanising attributes: calling it a friend, therapist or mentor, and describing it as understanding, supportive and empathetic. Its appeal lay in availability, non-judgement and personalisation. It offered low-risk, low-effort social interaction, less demanding than the real deal. Yet participants were quick to clarify this was a temporary, supplementary solution in vulnerable situations.

Ambivalence and resistance

With adoption came ambivalence. Some expressed discomfort with their reliance, fears of cognitive decline, or a sense of diminished autonomy. The cost of continued usage was a moral conflict arising from choosing to engage with this tech while aware of ethical reasons not to.

Overall, what stood out to me was:

a) There is a high awareness among users about the limitations and ethical concerns of this new tech.

b) They continue to make conscious trade-offs for the convenience and companionship provided by this tech.

c) They are not just passive adopters of this tech, but critically engage with it while negotiating their participation.

Seeing similar negotiations emerge across studies at PAIRS, whether it was school children in the UK articulating their curiosity and scepticism, Hollywood film production workers in the US balancing automation and job security, or queer communities in India grappling with safety and surveillance, connected the dots for me: these are all part of a broader global phenomenon around AI.

By grounding AI discourse in lived experiences rather than conjecture, PAIRS made tangible what can otherwise feel siloed or abstract. Bottom-up participation informed by user experiences can move participatory discourse beyond token consultation and shape AI policy, literacy and design. I left Delhi with renewed faith in the global efforts that put people over profit and translate into real, tangible change. If these conversations continue to grow, perhaps they will begin to seep into the mainstream and onto bigger stages too.

Check out the poster we presented at PAIRS here.